Load the text file in batches, splitting it around the suspected data corruption or invalid data entry at the 34-millionth record. And this is where GSplit comes to the rescue! Surely, loading the plain text file and inspecting the 34-millionth row directly would seem like the easiest solution!
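To make the idea of splitting by record count concrete, here is a minimal Python sketch. It is not how GSplit works internally, only an illustration of the same idea. The function name `split_by_lines`, the `piece_0001.txt` naming scheme, and the assumption that each record sits on its own line are all hypothetical choices made for this example.

```python
import os


def split_by_lines(src_path, out_dir, lines_per_piece):
    """Split src_path into pieces of at most lines_per_piece lines each.

    Returns the list of piece paths in order. Assumes one record per line.
    """
    if lines_per_piece < 1:
        raise ValueError("lines_per_piece must be >= 1")
    os.makedirs(out_dir, exist_ok=True)
    piece_paths = []
    out = None
    try:
        # Binary mode: bytes are copied untouched, so a corrupt record
        # cannot raise a decoding error during the split.
        with open(src_path, "rb") as src:
            # Iterating the file reads one line at a time, so memory use
            # stays small no matter how large the source file is.
            for index, line in enumerate(src):
                if index % lines_per_piece == 0:
                    if out is not None:
                        out.close()
                    name = "piece_%04d.txt" % (len(piece_paths) + 1)
                    piece_path = os.path.join(out_dir, name)
                    out = open(piece_path, "wb")
                    piece_paths.append(piece_path)
                out.write(line)
    finally:
        if out is not None:
            out.close()
    return piece_paths
```

With pieces of, say, one million lines each, the suspect record would land in a single known piece, which is small enough to open in an ordinary editor.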

#Gsplit 3 software

Microsoft Excel cannot load the file because it exceeds Excel's row limit! Even Microsoft Access could not load the file, as the row count and raw data exceed the maximum file size that Access can handle.

Even the developer-friendly Notepad++ could not load the data. Since the text file is so huge, Notepad could not open it either. Now, data corruption is never a welcome topic, and like anyone working with databases, we prefer all our records intact! Observing that the copying process always ends at the 34-millionth record, I determined that there must be invalid characters or data corruption somewhere in the 34-millionth record, and possibly onwards from that point.
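One way to check that suspicion without opening the whole file is to stream through it and test only the records around the failure point. The sketch below is an illustration under assumptions: the function name `find_suspect_records` is hypothetical, the file is assumed to hold one record per line, and "invalid" is taken to mean a NUL byte or bytes that do not decode as UTF-8. The real cause in any given file may be something else entirely.

```python
def find_suspect_records(path, first_record, last_record, encoding="utf-8"):
    """Return (record_number, reason) pairs for suspicious records.

    Record numbers are 1-based and the range is inclusive.
    """
    suspects = []
    with open(path, "rb") as src:
        # Lines are read one at a time, so the file never has to fit in RAM.
        for number, raw in enumerate(src, start=1):
            if number < first_record:
                continue
            if number > last_record:
                break
            if b"\x00" in raw:
                suspects.append((number, "contains NUL byte"))
                continue
            try:
                raw.decode(encoding)
            except UnicodeDecodeError as exc:
                reason = "invalid %s at byte %d" % (encoding, exc.start)
                suspects.append((number, reason))
    return suspects
```

For the case described here, a call such as `find_suspect_records("dump.txt", 33_999_990, 34_000_010)` would check a small window around the 34-millionth record (`dump.txt` is a placeholder file name).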

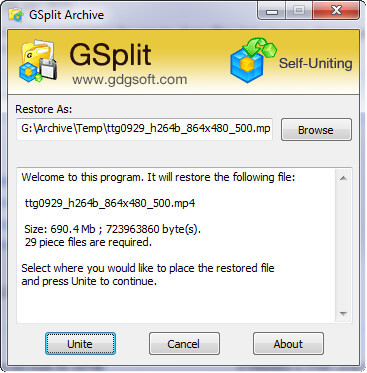

Also, a file this large hits the memory limits of Office applications like Excel and Access. The crash happens because typical programs like Notepad, Microsoft Excel, Microsoft Access, and even SQL Server attempt to take the 2.4-gigabyte text file and immediately load all of it into RAM, and from there copy the contents to the actual database or display them on screen. While this typical behavior works fine for most files, a file this big chokes the already running SQL database and competes with it for RAM.

Adding complexity to the task, I get repeated and unexplained errors after SQL has attempted to copy the 34-millionth record! SQL just crashes at the 34-million mark and aborts the copy. Importing this large file into the database also takes a very long time (at least 40 minutes of plain waiting!).

I will share with you the tutorials that I have found helpful for splitting text/CSV files with GSplit.

A large text file of 2.4 gigabytes needs to be loaded into the SQL database. I would like to share the tutorial below, as I have personally faced the critical need to split and restore a large text file of 44 million database records! Of course, on top of this task comes the complication of choking the production database, as having it retrieve all 44 million records gobbles up the CPU and memory! Wait a few more minutes while it imports the large database dump of 44 million records, and it crashes the entire database!

GSplit includes a lot of customization features for easily and safely splitting your files. It also creates a Self-Uniting program that automatically restores the original file with no additional requirements.
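The memory problem described above comes from reading the whole file at once. As a rough sketch of the alternative, the Python below reads a fixed number of records at a time and hands each batch to a loader. The names `iter_batches`, `load_in_batches`, and `load_batch` are hypothetical, and `load_batch` stands in for whatever actually inserts rows into your database. This is not part of GSplit or SQL Server.

```python
from itertools import islice


def iter_batches(path, batch_size):
    """Yield (first_record_number, raw_lines) one batch at a time."""
    with open(path, "rb") as src:
        first = 1
        while True:
            # islice pulls at most batch_size lines from the open file,
            # so only one batch is held in memory at any moment.
            batch = list(islice(src, batch_size))
            if not batch:
                break
            yield first, batch
            first += len(batch)


def load_in_batches(path, batch_size, load_batch):
    """Call load_batch(lines) for every batch.

    Returns the first record number of each batch that failed, so the
    problem can be narrowed down instead of aborting the whole import.
    """
    failed = []
    for first, batch in iter_batches(path, batch_size):
        try:
            load_batch(batch)
        except Exception:
            # Assumption for this sketch: any error marks the batch as bad.
            failed.append(first)
    return failed
```

A failed batch can then be retried with a smaller `batch_size` to close in on the exact record that breaks the import.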

#Gsplit 3 free

GSplit is a powerful and free file splitter that lets you split your large files into a set of smaller files called pieces.

#Gsplit 3 how to

How to split large Code, Text, and Database files with GSplit

Are you a web developer working daily with hundreds to thousands of lines of code? Are you a blogger who needs to back up, import, and export your WordPress databases? Are you an analyst who occasionally needs to work with hundreds of megabytes of data exports and databases? Are you a database administrator who needs to manage ever-growing database records?

Ever had the nightmare of these data files growing so big that export, import, and even recovery become impossible due to file size and application limits?